How to Do Fuzzy String Matching in Python

- Use thefuzz Module to Match Fuzzy String in Python
- Use process Module to Use Fuzzy String Match in an Efficient Way

Today, we will learn how to use the thefuzz library, which lets us do fuzzy string matching in Python. We will also learn how to use its process module to match or extract strings from a collection efficiently using fuzzy matching.

Use thefuzz Module to Match Fuzzy String in Python

This library had a funny name in its older versions and was later renamed. The renamed project lives in its own maintained repository, and its current version is called thefuzz, which you can install with the following command.

pip install thefuzz

If you look at examples online, you will still find some that use the old name, fuzzywuzzy. That package is outdated and no longer maintained, so prefer thefuzz in new code.

The thefuzz library is based on python-Levenshtein, so install that package as well using this command.

pip install python-Levenshtein

If the installation fails, try the following command instead. If that also fails, search for the specific error message to find a fix for your platform.

pip install python-Levenshtein-wheels

Essentially, fuzzy string matching compares two strings, much like a regex match or a plain equality check, but it does not return a strict yes or no. In fuzzy logic, the truth value of a condition can be any real number between 0 and 1.

So, instead of saying that a match is True or False, you give it a value between 0 and 1. That value is derived from the dissimilarity between the two strings, expressed as a number called the distance and computed with a distance metric.
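To make the idea of a distance concrete, here is a minimal sketch of Levenshtein (edit) distance in plain Python, scaled to a value between 0 and 1. The helper names levenshtein and similarity are illustrative and not part of thefuzz, and dividing by the length of the longer string is just one common convention assumed here; thefuzz may normalize its scores differently.

```python
def levenshtein(a: str, b: str) -> int:
    """Count the single-character insertions, deletions, and
    substitutions needed to turn a into b."""
    # previous[j] holds the distance between the processed prefix of a
    # and the first j characters of b.
    previous = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(
                min(
                    previous[j] + 1,         # delete ch_a
                    current[j - 1] + 1,      # insert ch_b
                    previous[j - 1] + cost,  # substitute or keep
                )
            )
        previous = current
    return previous[-1]


def similarity(a: str, b: str) -> float:
    """Map the distance to a 0..1 score (assumed convention)."""
    longest = max(len(a), len(b))
    if longest == 0:
        return 1.0  # two empty strings are identical
    return 1 - levenshtein(a, b) / longest


print(levenshtein("kitten", "sitting"))
print(similarity("Just a test", "just a test"))
```

A distance of 0 means the strings are identical, and every extra edit lowers the similarity score.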

Given two strings, an algorithm computes the distance between them. Once the installation is done, import fuzz and process from the thefuzz module.

from thefuzz import fuzz, process

Before using fuzz, we will compare two strings manually with the equality operators.

ST1 = "Just a test"

ST2 = "just a test"

print(ST1 == ST2)

print(ST1 != ST2)

These comparisons return Boolean values, whereas a fuzzy comparison gives you a percentage that tells you how similar the strings are.

False

True

Fuzzy string matching lets us make this comparison in a more flexible way. Take the two strings above, which differ only in the case of the first letter (upper case J versus lower case j).

If we now call the ratio() function, which gives us a similarity metric, it returns a pretty high score: 91 out of 100.

from thefuzz import fuzz, process

print(fuzz.ratio(ST1, ST2))

Output:

91
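To see where a score like 91 can come from, the following sketch reproduces it with the standard library's difflib.SequenceMatcher. The function name ratio_sketch is hypothetical, and this is only an approximation for illustration; the algorithm thefuzz uses internally depends on the installed version.

```python
from difflib import SequenceMatcher


def ratio_sketch(a: str, b: str) -> int:
    """Return a 0-100 similarity score based on matching character blocks."""
    # SequenceMatcher.ratio() is 2 * M / T, where M is the number of
    # matched characters and T is the combined length of both strings.
    return int(round(100 * SequenceMatcher(None, a, b).ratio()))


# 10 of the 11 characters match, so the score is 2 * 10 / 22, about 0.909.
print(ratio_sketch("Just a test", "just a test"))  # 91
```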

Now let's see what happens with longer strings that differ by more than a single character.

ST1 = "This is a test string for test"

ST2 = "There aresome test string for testing"

print(fuzz.ratio(ST1, ST2))

There is still some similarity, and the score comes out at 75. This is just the simple ratio, nothing complicated.

75

We can also try the partial ratio. For example, we have two strings, one of which is contained in the other, and we want to determine their score.

ST1 = "There are test"

ST2 = "There are test string for testing"

print(fuzz.partial_ratio(ST1, ST2))

Using partial_ratio(), we get 100 because the two strings share the same substring (There are test).

ST2 contains some additional words, but that does not matter because the partial ratio scores only the best-matching part. The simple ratio does not work that way; it would penalize the extra words.

100
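The idea behind a partial ratio can be sketched by sliding the shorter string over the longer one and keeping the best window score. This is a brute-force illustration built on difflib with a hypothetical function name, not the optimized routine thefuzz ships, so scores for other inputs may differ slightly.

```python
from difflib import SequenceMatcher


def partial_ratio_sketch(a: str, b: str) -> int:
    """Score the best alignment of the shorter string inside the longer one."""
    shorter, longer = sorted((a, b), key=len)
    if not shorter:
        # Nothing to slide: identical only if both strings are empty.
        return 100 if not longer else 0
    best = 0.0
    # Compare the shorter string with every window of the same length.
    for start in range(len(longer) - len(shorter) + 1):
        window = longer[start:start + len(shorter)]
        best = max(best, SequenceMatcher(None, shorter, window).ratio())
    return int(round(100 * best))


print(partial_ratio_sketch("There are test", "There are test string for testing"))  # 100
```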

Let’s say we have strings that contain the same words in a different order; then we use another metric.

CASE_1 = "This generation rules the nation"

CASE_2 = "Rules the nation This generation"

Both cases contain exactly the same words and mean the same thing, but ratio() gives a fairly low score, and partial_ratio() does too.

With token_sort_ratio(), the score is 100 because the strings consist of exactly the same words in a different order. The token_sort_ratio() function splits each string into individual tokens and sorts them before comparing, so it does not matter in what order they appear.

print(fuzz.ratio(CASE_1, CASE_2))

print(fuzz.partial_ratio(CASE_1, CASE_2))

print(fuzz.token_sort_ratio(CASE_1, CASE_2))

Output:

47

64

100
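The sort-then-compare idea can be sketched in a few lines with the standard library. The function name is hypothetical, and the normalization here (lowercasing and splitting on whitespace) is a simplifying assumption; thefuzz applies its own preprocessing.

```python
from difflib import SequenceMatcher


def token_sort_ratio_sketch(a: str, b: str) -> int:
    """Compare two strings after sorting their lowercase tokens."""

    def normalize(text: str) -> str:
        # Split into words, sort them, and join them back together.
        return " ".join(sorted(text.lower().split()))

    matcher = SequenceMatcher(None, normalize(a), normalize(b))
    return int(round(100 * matcher.ratio()))


# Both strings normalize to "generation nation rules the this".
print(
    token_sort_ratio_sketch(
        "This generation rules the nation",
        "Rules the nation This generation",
    )
)  # 100
```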

Now, if we add an extra word to one of the strings, we get a different number, but the principle is the same: this ratio does not care about the order of the individual tokens.

CASE_1 = "This generation rules the nation"

CASE_2 = "Rules the nation has This generation"

print(fuzz.ratio(CASE_1, CASE_2))

print(fuzz.partial_ratio(CASE_1, CASE_2))

print(fuzz.token_sort_ratio(CASE_1, CASE_2))

Output:

44

64

94

The token_sort_ratio() score drops here because one string has an extra word. There is also a function called token_set_ratio(), which treats the tokens as a set, and a set contains each token just once.

So, it does not matter how often a token occurs; let’s look at an example string.

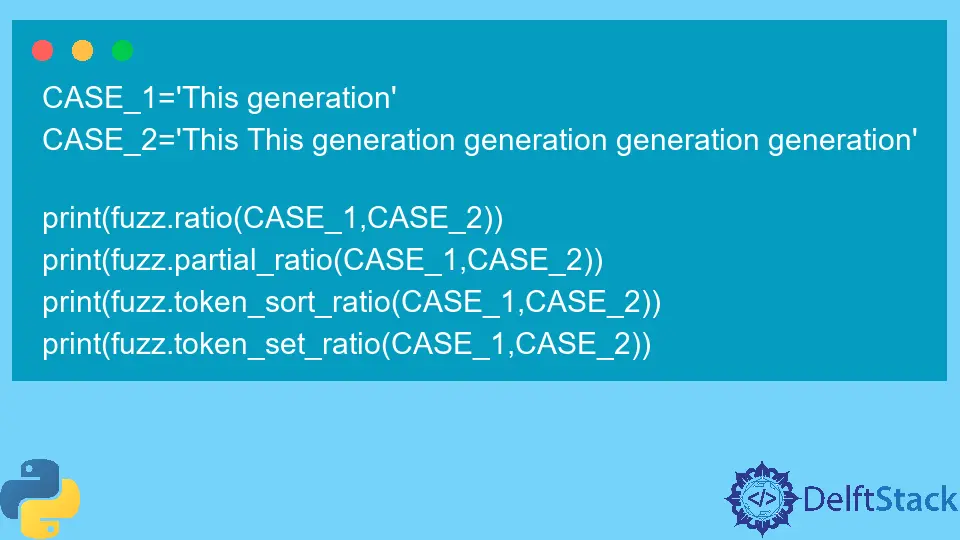

CASE_1 = "This generation"

CASE_2 = "This This generation generation generation generation"

print(fuzz.ratio(CASE_1, CASE_2))

print(fuzz.partial_ratio(CASE_1, CASE_2))

print(fuzz.token_sort_ratio(CASE_1, CASE_2))

print(fuzz.token_set_ratio(CASE_1, CASE_2))

Some of the scores are pretty low, but token_set_ratio() returns 100 because the same two tokens, This and generation, exist in both strings.
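A rough sketch of the set-based comparison, again using only the standard library: the tokens of each string are reduced to sets, and the shared tokens are compared against each string's combined tokens. The function name and the whitespace-based tokenization are assumptions made for illustration, so treat this as an approximation of the idea and not as thefuzz's exact code.

```python
from difflib import SequenceMatcher


def token_set_ratio_sketch(a: str, b: str) -> int:
    """Compare two strings by their unique tokens, ignoring repeats and order."""

    def score(x: str, y: str) -> int:
        return int(round(100 * SequenceMatcher(None, x, y).ratio()))

    tokens_a = set(a.lower().split())
    tokens_b = set(b.lower().split())
    common = " ".join(sorted(tokens_a & tokens_b))
    only_a = " ".join(sorted(tokens_a - tokens_b))
    only_b = " ".join(sorted(tokens_b - tokens_a))
    # Shared tokens first, followed by whatever is unique to each side.
    combined_a = f"{common} {only_a}".strip()
    combined_b = f"{common} {only_b}".strip()
    return max(
        score(common, combined_a),
        score(common, combined_b),
        score(combined_a, combined_b),
    )


# Both strings reduce to the token set {"this", "generation"}.
print(
    token_set_ratio_sketch(
        "This generation",
        "This This generation generation generation generation",
    )
)  # 100
```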

Use process Module to Use Fuzzy String Match in an Efficient Way

Besides fuzz, thefuzz provides the process module, which is helpful because it can extract the best fuzzy matches from a collection.

For example, we have prepared a couple of list items to demonstrate.

Diff_items = [

"programing language",

"Native language",

"React language",

"People stuff",

"This generation",

"Coding and stuff",

]

Some of them are pretty similar, as you can see (Native language or programing language), and now we can pick the best individual matches.

We could do that manually by evaluating every score and keeping the top ones, but the process module does it for us. To do that, we call the extract() function from the process module.

It takes a few parameters: the first is the target string, the second is the collection to extract from, and the third is limit, which caps the number of matches, here at two.

If we extract matches for a string such as language, the two results chosen in this case are programing language and Native language.

print(process.extract("language", Diff_items, limit=2))

Output:

[('programing language', 90), ('Native language', 90)]
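Conceptually, extracting the best matches means scoring every candidate, sorting by score, and keeping the first few. The sketch below shows that manual approach with a hypothetical extract_sketch helper and a plain difflib score. The default scorer in thefuzz is more elaborate, so the scores and even the order it returns can differ from what this sketch produces.

```python
from difflib import SequenceMatcher


def extract_sketch(query, choices, limit=5):
    """Return up to `limit` (choice, score) pairs, best score first."""

    def score(choice):
        matcher = SequenceMatcher(None, query.lower(), choice.lower())
        return int(round(100 * matcher.ratio()))

    scored = [(choice, score(choice)) for choice in choices]
    # Highest score first; ties keep their original order.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:limit]


items = [
    "programing language",
    "Native language",
    "React language",
    "People stuff",
    "This generation",
    "Coding and stuff",
]
top_two = extract_sketch("language", items, limit=2)
print(top_two)
```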

The catch is that this is not NLP (natural language processing); there is no intelligence behind it, and it only compares the characters of the individual tokens. For example, suppose we use programing as the target string and run this.

The first match will be programing language, but the second one will be Native language rather than Coding and stuff.

Semantically, coding is closer to programming, but that does not matter here because we are not using AI.

Diff_items = [

"programing language",

"Native language",

"React language",

"People stuff",

"Hello World",

"Coding and stuff",

]

print(process.extract("programing", Diff_items, limit=2))

Output:

[('programing language', 90), ('Native language', 36)]

As a last example of how this can be useful, suppose we have a massive library of books and want to find one, but we do not know its exact title.

In this case, we can use extract() and pass fuzz.token_sort_ratio as the scorer argument.

LISt_OF_Books = [

"The python everyone volume 1 - Beginner",

"The python everyone volume 2 - Machine Learning",

"The python everyone volume 3 - Data Science",

"The python everyone volume 4 - Finance",

"The python everyone volume 5 - Neural Network",

"The python everyone volume 6 - Computer Vision",

"Different Data Science book",

"Java everyone beginner book",

"python everyone Algorithms and Data Structure",

]

print(

process.extract(

"python Data Science", LISt_OF_Books, limit=3, scorer=fuzz.token_sort_ratio

)

)

Note that we pass the function itself without calling it. We get the expected book as the top result and another data science book as the second result.

Output:

[('The python everyone volume 3 - Data Science', 63), ('Different Data Science book', 61), ('python everyone Algorithms and Data Structure', 47)]
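Passing a function without calling it works because functions are ordinary objects in Python. The following standard-library sketch shows a pluggable scorer in a hypothetical best_match helper; all names here are illustrative and none of them belong to thefuzz.

```python
from difflib import SequenceMatcher


def plain_score(a: str, b: str) -> int:
    """Order-sensitive similarity on a 0-100 scale."""
    return int(round(100 * SequenceMatcher(None, a, b).ratio()))


def sorted_token_score(a: str, b: str) -> int:
    """Order-insensitive similarity: sort the tokens before scoring."""

    def normalize(text: str) -> str:
        return " ".join(sorted(text.lower().split()))

    return plain_score(normalize(a), normalize(b))


def best_match(query, choices, scorer=plain_score):
    # scorer is a function object; best_match calls it once per choice.
    return max(choices, key=lambda choice: scorer(query, choice))


choices = ["nation generation", "generation station"]
print(best_match("generation nation", choices))
print(best_match("generation nation", choices, scorer=sorted_token_score))
```

Writing scorer=sorted_token_score hands over the function itself, whereas scorer=sorted_token_score() would try to call it immediately with no arguments and fail. Swapping the scorer changes which choice wins: the plain score prefers generation station, while the token-sorted score prefers nation generation.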

This is pretty accurate, and it can be quite helpful if you have a project where you need to look things up in a fuzzy way. You can also use it to automate your procedures.

You can find more help in additional resources on GitHub and Stack Overflow.

Hello! I am Salman Bin Mehmood(Baum), a software developer and I help organizations, address complex problems. My expertise lies within back-end, data science and machine learning. I am a lifelong learner, currently working on metaverse, and enrolled in a course building an AI application with python. I love solving problems and developing bug-free software for people. I write content related to python and hot Technologies.
