Elastic Search in MySQL

We will learn how to integrate ElasticSearch with MySQL. We will also examine a few key differences between JSON format databases and relational databases.

Integrate ElasticSearch With MySQL

As we know, MySQL is a relational-based data management system that stores data in the schema.

On the other hand, Elastic Search (ES) is NoSQL based on the data store. It is an open-source distributed search engine.

It provides real-time data analysis. Hence it is more efficient in some cases than RDBMS.

It searches websites/applications, collects and analyzes log data, data analysis and visualization. The basic terminology difference between a JSON format DB and a relational database is: database equals cluster, tables equal indexes (data organization mechanism, stores complex data structure in JSON doc), row equals documents and columns equal fields.

The collection of nodes is called a cluster; shards are data pieces. The pre-req here is we need to have MySQL installed.

An open-source version can be found in the MySQL Community Server section, which you can locate at the MySQL Downloads site.

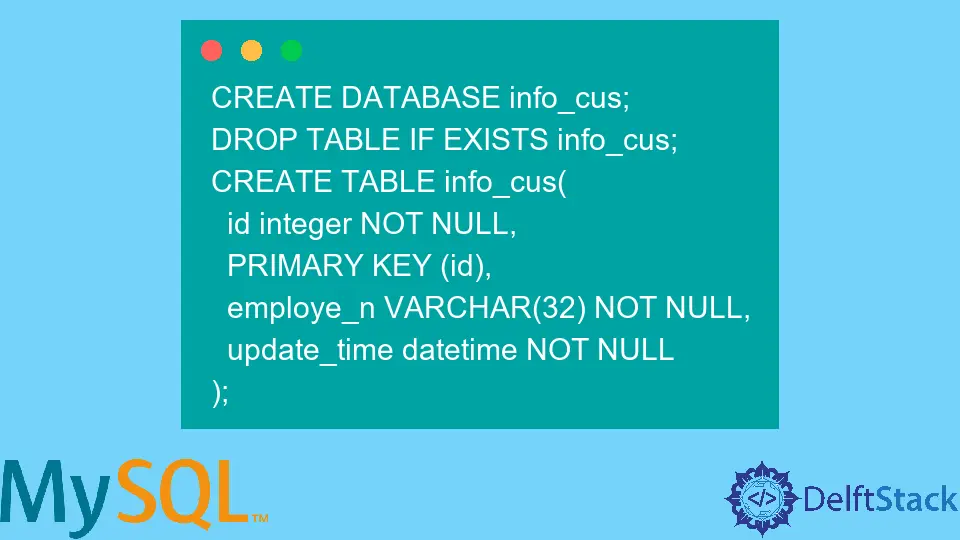

Next, we create a table in MySQL.

CREATE DATABASE info_cus;

DROP TABLE IF EXISTS info_cus;

CREATE TABLE info_cus(

id integer NOT NULL,

PRIMARY KEY (id),

employe_n VARCHAR(32) NOT NULL,

update_time datetime NOT NULL

);

Insert Query

Now we go to the MySQL server and insert these records:

INSERT INTO info_cus (id, employe_n,update_time)

VALUES (1,'ali',CURDATE()),

(2,'Ayesha',CURDATE()),

(3,'Rizwan',CURDATE()),

(4,'Tanveer',CURDATE());

Now we check by:

select * from info_cus;

Output:

id employe_n update_time

1 ali 2022-11-13 15:31:05

2 Ayesha 2022-11-13 15:31:05

3 Rizwan 2022-11-13 15:31:05

4 Tanveer 2022-11-13 15:31:05

We are good to go if we get the correct table with records.

We need to download the JDBC connector to connect the two since the ES is based on Java. This connection will help to extract the required data from the datastore and move it on to the Elastic Search.

To download the JDBC connector go to this link. There will be a jar file; since it is universal, it will run on any OS - Windows, macOS, etc.

Next, we extract the file and make sure that we use the bin.jar file no matter what version you have installed, copy this file to the root directory with a name that says jdbc.jar. In the <localpath>/logstash-7.12.0/, generate a new text file named jdbc_conn.conf.

Use the following code given below in this new text file. It will create a Logstash pipeline through a JDBC plugin.

input {

jdbc {

jdbc_driver_library => "<driverpath>/mysql-connector-java-<versionNumber>.jar"

jdbc_driver_class => "com.mysql.jdbc.Driver"

jdbc_connection_string => "jdbc:mysql://<MySQL host>:3306/es_db"

jdbc_user => "<myusername>"

jdbc_password => "<mypassword>"

jdbc_paging_enabled => true

tracking_column => "unix_ts_in__seconds"

use_column_value => true

tracking_column_type => "numeric"

schedule => "*/5 * * * * *"

statement => "select *, unix_timestamp AS unix_ts_in__seconds FROM elas_table where (unix_timestamp > :sql_last_value AND modification_time < NOW()) ORDER BY modification_time ASC"

}

}

filter {

mutate {

copy => { "id" => "[@metadata][_id]"}

remove_field => ["id", "@version", "unix_ts_in__seconds"]

}

}

Output:

{

stdout { codec => "rubydebug"}

}

Now, open Logstash with your new JDBC_conn config file: bin/logstash -f jdbc_conn.conf. Finally, we go to Logstash to move the data and log into Elastic Search to authenticate the data in Kibana.

Hello! I am Salman Bin Mehmood(Baum), a software developer and I help organizations, address complex problems. My expertise lies within back-end, data science and machine learning. I am a lifelong learner, currently working on metaverse, and enrolled in a course building an AI application with python. I love solving problems and developing bug-free software for people. I write content related to python and hot Technologies.

LinkedIn